ZFS

ZFS is a file system available on a few operating systems, used mostly for storage and backup use cases.

Links:

- Very good first introduction by TrueNAS
- A Closer Look at ZFS, Vdevs and Performance
- ZFS 101—Understanding ZFS storage and performance - Ars Technica
- Deep-dive on ZFS on Linux - Proxmox Wiki

Introduction

See the introduction written by users on the TrueNAS forum.

Concepts

Capabilities

- Copy on Write filesystem
- Snapshots
- Self-healing
- RAIDZ
    - The number after RAIDZ indicates how many disks per vdev you can lose without losing data
    - RAIDZ1
        - Not recommended with disks > 1 TiB

Terminology

vdev = virtual device

- for data, possibilities:
    - single disk - stripe: sends all writes to all disks in parallel, for aggregated workflow (not at the app level)
    - 2+ disks mirrored
        - n-1 disks can be lost without data loss
        - supports fixing silent corruption, based on the other disk and the checksum
        - writes go as fast as the slowest disk
        - reads go quicker: aggregate IOPS of all disks
    - group of disks in RAIDZ
        - any number of data disks, plus a defined number (1 to 3) of parity disks
        - write
            - one block is sliced up across disks
            - doesn't necessarily use all data disks
            - no write hole thanks to Copy on Write, as the uberblock update is the last operation (unlike RAID-5)
            - no battery backup necessary
            - IOPS of the slowest disk
        - read
            - IOPS of the slowest disk
        - Recommended: 3 to 9 disks per vdev
        - The smallest disk size is used
- Disk capacity
    - At 90% capacity, ZFS switches from performance to space optimization
- other types - taken from here
    - DATA: A VDEV used to store the Data stored in the Pool and its Datasets
    - Cache: A VDEV used for L2ARC Cache, optional and only useful if RAM is maxed out
    - LOG: A dedicated VDEV for ZFS’s intent log, can improve performance
    - Hot Spare: A VDEV for spare Disks that can automatically replace broken ones in Data VDEVs
    - Metadata: A dedicated VDEV to store Metadata
    - Dedup: A dedicated VDEV to store deduplication data (Deduplication is not recommended)

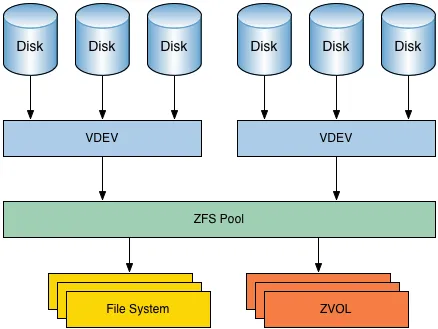
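The RAIDZ rules above (1 to 3 parity disks, smallest disk size used, 90% capacity mark) can be turned into a rough sizing calculation. The following is only a sketch: the function names `raidz_usable_gib` and `ninety_percent_gib` are made up for this note, sizes are whole GiB, and real usable space will be somewhat lower because of padding and metadata overhead.

```shell
#!/bin/sh
# Rough usable capacity of ONE RAIDZ vdev, in GiB (integer arithmetic).
# usage: raidz_usable_gib PARITY SIZE_GIB [SIZE_GIB ...]
raidz_usable_gib() {
    parity=$1
    shift
    count=$#
    # RAIDZ takes 1 to 3 parity disks and needs at least one data disk.
    if [ "$parity" -lt 1 ] || [ "$parity" -gt 3 ] || [ "$count" -le "$parity" ]; then
        echo "invalid layout" >&2
        return 1
    fi
    # Every member counts as if it had the size of the smallest disk.
    smallest=$1
    for size in "$@"; do
        if [ "$size" -lt "$smallest" ]; then
            smallest=$size
        fi
    done
    echo $(( (count - parity) * smallest ))
}

# Fill level (in GiB) that corresponds to the 90% mark from the notes.
ninety_percent_gib() {
    echo $(( $1 * 90 / 100 ))
}

# Six disks in RAIDZ2, one of them smaller than the others.
raidz_usable_gib 2 4000 4000 4000 3000 4000 4000   # prints 12000
```

In this example the single 3000 GiB disk caps every member at 3000 GiB, so the two parity disks and the size mismatch together cost roughly half of the raw capacity.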

pool = 1+ vdev

- Can be encrypted
- Contains datasets ≈ partitions
    - Can be encrypted
    - Snapshots
    - Quotas
- Contains zvol: block devices for swap or VM disks
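To make the pool / dataset / zvol relationship concrete, here is a minimal dry-run sketch using the standard `zpool` and `zfs` commands. The pool name `tank`, the dataset `tank/backups`, the zvol `tank/vm-disk0`, the device paths, and the quota and volume sizes are all hypothetical placeholders, and the `run` helper is just a convenience for this note. By default the script only prints the commands; nothing touches a disk unless `DRY_RUN=0` is set.

```shell
#!/bin/sh
# Dry-run by default: commands are printed, not executed.
# Set DRY_RUN=0 to really run them (needs root and spare disks).
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

POOL=tank                 # hypothetical pool name
DATASET="$POOL/backups"   # hypothetical dataset name

provision() {
    # pool = 1+ vdev: here a single mirror vdev made of two placeholder disks
    run zpool create "$POOL" mirror /dev/sdb /dev/sdc
    # dataset encrypted on its own, rather than at the pool level
    run zfs create -o encryption=on -o keyformat=passphrase "$DATASET"
    # per-dataset quota and snapshot
    run zfs set quota=500G "$DATASET"
    run zfs snapshot "$DATASET@before-change"
    # zvol: a fixed-size block device, e.g. for a VM disk
    run zfs create -V 8G "$POOL/vm-disk0"
}

provision
```

Encrypting the dataset instead of the pool root is one way to follow the "not at the pool level" advice from the notes while still keeping the data encrypted.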

Notes

- On ECC memory
    - ECC memory is recommended but not a must, and that advice holds for any file system
        - See also Will ZFS and non-ECC RAM kill your data? - JRS Systems: the blog → TL;DR: no.
- On Encryption
    - See TrueNAS Encryption documentation
    - Don't use it at the pool level if you have only 1 pool
- On system design
    - Check out the TrueNAS Community Hardware Guide
    - And also TrueNAS Hardware Guide

RAM

- Consumes a lot of RAM with deduplication: ~1 GB of RAM per TB of physical disk; minimum 8 GB - source
- Adaptive Replacement Cache (ARC) uses 50% of the host memory by default, but can be configured (see Proxmox Wiki)
- Rule of thumb: 2 GiB base + 1 GiB for each TiB of storage
- Change `zfs_arc_max`
    - Temporary change: `echo "$((4 * 1024*1024*1024))" > /sys/module/zfs/parameters/zfs_arc_max` for 4 GiB
    - Permanent change:
        - Create/Edit `/etc/modprobe.d/zfs.conf`
        - Add `options zfs zfs_arc_max=4294967296` (this sets it to 4 GiB)
        - Update your `initramfs` with `update-initramfs -u -k all`
- `zfs_arc_min` must be ≤ `zfs_arc_max`
    - Default: 1/32 of system memory
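The sizing rules above can be checked with a little arithmetic. This sketch assumes the rule of thumb (2 GiB base + 1 GiB per TiB) is used to pick `zfs_arc_max`; the helper names `rule_of_thumb_bytes`, `modprobe_line`, `default_arc_min_bytes` and `check_arc_bounds` are invented for this note and are not part of ZFS. It only prints values and writes nothing to the system.

```shell
#!/bin/sh
GIB=$((1024 * 1024 * 1024))

# Rule of thumb from the notes: 2 GiB base + 1 GiB per TiB of storage.
# usage: rule_of_thumb_bytes STORAGE_TIB
rule_of_thumb_bytes() {
    echo $(( (2 + $1) * GIB ))
}

# Line to place in /etc/modprobe.d/zfs.conf for a given size in bytes.
modprobe_line() {
    echo "options zfs zfs_arc_max=$1"
}

# Default zfs_arc_min according to the notes: 1/32 of system memory.
# usage: default_arc_min_bytes RAM_BYTES
default_arc_min_bytes() {
    echo $(( $1 / 32 ))
}

# Succeeds only when zfs_arc_min <= zfs_arc_max.
# usage: check_arc_bounds ARC_MIN_BYTES ARC_MAX_BYTES
check_arc_bounds() {
    [ "$1" -le "$2" ]
}

# 2 TiB of storage gives 4 GiB, the same value used in the notes.
modprobe_line "$(rule_of_thumb_bytes 2)"   # prints options zfs zfs_arc_max=4294967296
```

On a host with 64 GiB of RAM, `default_arc_min_bytes` yields 2 GiB, so a 4 GiB `zfs_arc_max` still satisfies the `zfs_arc_min` ≤ `zfs_arc_max` constraint; on hosts with much more RAM the minimum may need lowering as well.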